In the dynamic landscape of modern technology, few innovations have shown as much potential to transform various industries as machine learning (ML). From manufacturing to healthcare, retail and finance, many companies are using ML to boost efficiency, automate tedious tasks, and drive groundbreaking discoveries. However, as of today, many machine learning models fail to make it to production, leading to a lot of missed opportunities. This is where the concept of MLOps steps in to bridge the gap between prototype and production.

MLOps, short for Machine Learning Operations, is a paradigm that seeks to streamline and optimize the end-to-end machine learning lifecycle. At its core, MLOps revolves around teams collaborating to build, test, automate and monitor their machine learning pipelines.

In this article, you will get to know the challenges of ML projects and find out how MLOps principles can help solve them. At the end, you’ll find an overview of the most relevant MLOps tools and their features to enable you to find the setup that best fits your needs.

The Machine Learning Lifecycle

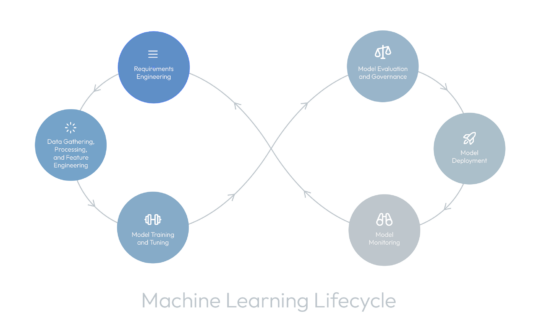

Machine learning projects typically consist of several phases. They can involve different persons or teams, such as Data Engineers, Data Scientists, Software Engineers, or DevOps Engineers. The project phases are not strictly linear but may require iterations or revisits, for example, due to changing input data or project requirements. The following figure illustrates the process:

Let’s take a closer look at the phases:

- Requirements Engineering: Define the objectives, scope, and success criteria of the project, as well as any constraints or limitations.

- Data Gathering, Processing, and Feature Engineering: Collect relevant data sources, preprocess the data to handle missing values and outliers, and engineer features to make them suitable for training ML models.

- Model Training and Tuning: Select appropriate ML algorithms, train models using the prepared data, and tune their hyperparameters.

- Model Evaluation and Governance: Assess the performance of trained models using different evaluation metrics and validation techniques. Ensure model fairness, transparency, and compliance with regulations.

- Model Deployment: Deploy the trained models into production environments where they can generate predictions or make decisions.

- Model Monitoring: Continuously monitor the deployed models to detect performance degradation, data drift, or other anomalies.
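To make the monitoring phase more concrete, here is a minimal sketch of a data drift check. It assumes a single numeric feature and uses a simple heuristic (how far the mean of live data has moved, measured in standard deviations of the training data). The function names, the sample values, and the threshold of 0.5 are illustrative assumptions, not part of any particular MLOps tool.

```python
import statistics


def mean_shift_score(reference, live):
    """Return how many reference standard deviations the live mean has moved."""
    ref_mean = statistics.fmean(reference)
    ref_std = statistics.stdev(reference)
    if ref_std == 0:
        raise ValueError("reference sample has zero variance")
    return abs(statistics.fmean(live) - ref_mean) / ref_std


def has_drifted(reference, live, threshold=0.5):
    """Flag drift when the shift exceeds the (assumed) threshold."""
    return mean_shift_score(reference, live) > threshold


# Hypothetical feature values seen at training time and in production.
reference = [10.0, 11.0, 9.0, 10.5, 9.5, 10.0, 10.2, 9.8]
stable = [10.1, 9.9, 10.3, 9.7]
shifted = [13.0, 12.5, 13.5, 12.8]

print("stable batch drifted:", has_drifted(reference, stable))
print("shifted batch drifted:", has_drifted(reference, shifted))
```

Real monitoring setups usually compare whole distributions per feature and also track prediction quality, but the basic idea of comparing production data against a training-time reference is the same.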

Each of these phases presents its own challenges, and this is where MLOps can help.

Benefits of MLOps Principles and Tools

MLOps principles and tools can support each of the above phases and make them more efficient and scalable. This reduces risks, ultimately driving business value and competitive advantage. MLOps can, for example, facilitate:

- Versioning of Data and Code: Version control is critical for ensuring reproducibility and traceability in machine learning projects. By versioning data and code and assuring identical runtime environments, organizations can track changes over time, roll back to previous versions if necessary, and collaborate more effectively across teams.

- Experiment Tracking: Since developing machine learning projects requires conducting multiple experiments using different models, parameters, or training data, it’s easy to lose track of the experiments you have performed. Experiment tracking platforms store all the necessary metrics and metadata to facilitate optimization and troubleshooting.

- Hyperparameter Optimization: Most ML models have hyperparameters that must be set before training, e.g., the learning rate of a neural network or the maximum depth of a decision tree. Hyperparameter tuning tools help you pick hyperparameter values that optimize performance.

- Model Registry: An ML model can go through different stages during its lifecycle, from development to staging, to production, to being archived. Model registries facilitate this process by providing possibilities to add model versions, tags, or aliases.

- Model Deployment: If an ML model doesn’t get deployed into production, it’s of no use to the organization. By automating tasks such as code integration, testing, and deployment, CI/CD tools enable organizations to iterate rapidly and deploy changes with confidence.

- Model Monitoring: The performance of ML models often degrades post-deployment due to changes in input data. Model monitoring tools help to detect such shifts and anomalies and can send out alerts automatically.

- Automated Model Retraining: If data drift is detected during the model monitoring phase, the model needs to be retrained. Tools for automated model retraining simplify this process.
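Several of the points above (versioning, experiment tracking, and hyperparameter optimization) can be illustrated together in a small, tool-agnostic sketch. It uses only the Python standard library and a toy one-parameter model; the helper names (`fingerprint`, `train_and_score`, `run_grid_search`), the data, and the grid values are hypothetical and do not reflect the API of any tool discussed in this article.

```python
import hashlib
import itertools
import json


def fingerprint(rows):
    """Hash the training data so each run records exactly which data it used."""
    payload = json.dumps(rows, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()[:12]


def train_and_score(rows, learning_rate, epochs):
    """Fit y = w * x by gradient descent and return the mean squared error."""
    w = 0.0
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in rows) / len(rows)
        w -= learning_rate * grad
    return sum((w * x - y) ** 2 for x, y in rows) / len(rows)


def run_grid_search(rows, grid):
    """Try every hyperparameter combination and log one record per run."""
    data_version = fingerprint(rows)
    names = sorted(grid)
    runs = []
    for values in itertools.product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        runs.append({
            "data_version": data_version,
            "params": params,
            "mse": train_and_score(rows, **params),
        })
    return runs


# Toy data following y = 2x, and an assumed hyperparameter grid.
rows = [[1.0, 2.0], [2.0, 4.0], [3.0, 6.0]]
grid = {"learning_rate": [0.01, 0.1], "epochs": [5, 50]}

runs = run_grid_search(rows, grid)
best = min(runs, key=lambda run: run["mse"])
print(json.dumps(best, indent=2))
```

Dedicated platforms add persistent storage, dashboards, and smarter search strategies on top of this idea, but the core record (which data, which parameters, which result) stays the same.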

Overview of Tools

From open-source platforms such as MLflow and Kubeflow to commercial solutions such as Weights & Biases and Amazon SageMaker, organizations have a wealth of options at their disposal to support their MLOps initiatives. Here’s an overview of some of the platforms and their capabilities:

| Name | Versioning of data and code | Experiment tracking | Hyperparameter optimization | Model registry | Model deployment | Model monitoring & automated model retraining | Open source | Active community | Website |
|---|---|---|---|---|---|---|---|---|---|
| Amazon Code Pipeline | | | | | | | no | | https://aws.amazon.com/de/codepipeline/ |
| Amazon SageMaker | | | | | | | no | | https://aws.amazon.com/de/sagemaker/ |
| Apache Airflow | | | | | | | yes | | https://airflow.apache.org/ |
| Azure ML + Azure DevOps Pipelines | | | | | | | no | | https://azure.microsoft.com/de-de/products/machine-learning |
| Comet ML | | | | | | | no | 450 customers | https://www.comet.com/ |
| Data Version Control (DVC) | train/test data | | | | | | yes | | https://dvc.org/ |
| Databricks managed MLflow | | | | | | | no | | https://www.databricks.com/product/managed-mlflow |
| GCP – Vertex AI | | | | | | | no | | https://cloud.google.com/vertex-ai?hl=de |
| Hydra | | | | | | | yes | | https://hydra.cc/ |
| IBM Cloud Pak for Data (IBM Watson Studio) | | | | | | | no | | https://www.ibm.com/products/watson-studio |
| Kubeflow | | | | | | | yes | | https://www.kubeflow.org/ |
| MLflow | model + code | | | | | no automation | yes | 16 million monthly downloads | https://mlflow.org/ |
| TensorFlow Extended (TFX) | | | | | | | yes | | https://github.com/tensorflow/tfx |
| Triton Inference Server | | | | | | | yes | | https://www.nvidia.com/de-de/ai-data-science/products/triton-inference-server/ |
| Weights & Biases | | | | | | no automation | no | 800,000 users | https://wandb.ai |

Wrapping Up

Conducting a successful machine learning project is not easy. Projects are typically iterative and involve professionals in several different roles. From the inception of machine learning prototypes to their seamless integration into production, MLOps enables efficiency, reproducibility, scalability and innovation in your projects. There’s no one-size-fits-all solution for every project or company, but there is a plethora of tools to help you solve your individual problems, and selecting the right subset can make a crucial difference in a project.

Follow our journey and stay tuned for further posts that will cover our take on tool selection, as well as an example LLM project that fully uses MLOps.
